Ever come across a paragraph with blanks and thought it was just a simple word game? That’s the Cloze Test in action, and there’s more to it than meets the eye. It’s not just about guessing words; it’s about understanding the story and figuring out which words complete the picture. For teachers, it’s a peek into how well students understand language—from the words they choose to the way they piece together a story.

A Cloze Test (also called the “cloze deletion test”) is an exercise, test, or assessment built on a passage (the cloze text) from which certain words have been removed. The teacher instructs students to restore the missing words. To do so, students must draw on context and vocabulary to identify the word that belongs in each blank.

The term “cloze” comes from the principle of “closure” in Gestalt psychology. This principle suggests that humans have a natural tendency to perceive things as complete or whole, even when parts are missing.

EXAMPLE:

A language teacher gives the following passage to students:

“Today, I went to the ________ and bought some bread and peanut butter. I knew it was going to rain, but I forgot to take my ________, and got wet on the way.”

The teacher instructs the students to fill in each blank with the word they think best fits the passage. Most cloze tests depend on both linguistic context and content knowledge. The surrounding context provides clues, and the words immediately before and after each blank are especially important. For instance, the phrase “bread and peanut butter” suggests a place like “store,” “shop,” or “market.” Likewise, “rain” and “got wet” point to the second blank, which can be filled with “umbrella” or “raincoat.”
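Checking answers against a set of acceptable words, as in the example above, is easy to sketch in Python. The acceptable-word sets come straight from the discussion of the example; the `check_answers` name and the case-insensitive comparison are illustrative choices, not part of any standard cloze procedure.

```python
# Acceptable answers for each blank in the example passage.
ACCEPTABLE: list[set[str]] = [
    {"store", "shop", "market"},   # blank 1: where bread and peanut butter are bought
    {"umbrella", "raincoat"},      # blank 2: what would have kept the writer dry
]

def check_answers(responses: list[str], acceptable: list[set[str]]) -> list[bool]:
    """Return True for each response that matches one of that blank's acceptable words."""
    if len(responses) != len(acceptable):
        raise ValueError("expected one response per blank")
    # Ignore case and surrounding spaces when comparing.
    return [r.strip().lower() in ok for r, ok in zip(responses, acceptable)]

print(check_answers(["Market", "coat"], ACCEPTABLE))  # [True, False]
```

This is the looser, acceptable-word style of scoring; many cloze tests instead require the exact deleted word.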

A study conducted across 500 schools in 2018 found that students who regularly practiced with Cloze Tests improved their reading comprehension by an average of 15% compared to those who didn’t.

Wilson L. Taylor, a pioneer in educational psychology, introduced the Cloze Test (formally known as the “cloze procedure”) in 1953. Taylor intended the technique as a measure of readability for English texts. The basic idea was to delete certain words in a text (for instance, every fifth word) and have the reader fill in the blanks.

To use the Cloze Test to evaluate reading material, follow these steps:

ADMINISTRATION

1. Omit every fifth word and replace it with a blank for the student to fill in.

2. Instruct students to write only one word in each blank and to try to fill in every blank.

3. Encourage guessing.

4. Advise students that you will not count misspellings as errors.
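The first administration step can be sketched in Python. This is a minimal illustration under stated assumptions: words are whitespace-separated, tokens with no word characters (letters, digits, or underscores) are not counted as words, and punctuation attached to a deleted word stays visible next to the blank. The names `make_cloze` and `BLANK` are hypothetical.

```python
import re

BLANK = "________"  # placeholder shown to the student

def make_cloze(text: str, n: int = 5) -> tuple[str, list[str]]:
    """Blank out every n-th word of `text`.

    Returns the cloze passage and the deleted words (the answer key), in order.
    """
    if n < 1:
        raise ValueError("n must be a positive integer")
    tokens = text.split()
    # Count only tokens containing a word character (letter, digit, or underscore).
    word_positions = [i for i, t in enumerate(tokens) if re.search(r"\w", t)]
    answers: list[str] = []
    for i in word_positions[n - 1::n]:
        # Separate leading/trailing punctuation so it stays visible around the blank.
        lead, word, trail = re.fullmatch(r"(\W*)(.*?)(\W*)", tokens[i]).groups()
        answers.append(word)
        tokens[i] = f"{lead}{BLANK}{trail}"
    return " ".join(tokens), answers

passage, key = make_cloze(
    "I knew it was going to rain, but I forgot to take my umbrella, and got wet on the way."
)
print(passage)  # I knew it was ________ to rain, but I ________ to take my umbrella, ________ got wet on the ________.
print(key)      # ['going', 'forgot', 'and', 'way']
```

Keeping the answer key alongside the passage makes the exact-word scoring step straightforward.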

SCORING

1. In most instances, students must restore the exact word that was deleted.

2. Count misspelled responses as correct when the intended word is clearly the right one.
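The scoring rules above leave misspellings to the teacher's judgment. The sketch below substitutes a plain string-similarity check (Python's `difflib.SequenceMatcher`) for that judgment. The `score_cloze` name and the 0.85 threshold are illustrative assumptions, not part of any standard cloze procedure.

```python
from difflib import SequenceMatcher

def score_cloze(responses: list[str], answers: list[str], threshold: float = 0.85) -> float:
    """Return the percentage of blanks restored correctly.

    A response counts if it matches the deleted word exactly (ignoring case and
    surrounding spaces) or is similar enough to pass as a misspelling of it.
    """
    if len(responses) != len(answers):
        raise ValueError("expected one response per blank")
    if not answers:
        return 0.0
    correct = 0
    for given, expected in zip(responses, answers):
        g, e = given.strip().lower(), expected.strip().lower()
        # Assumed stand-in for teacher judgment: similarity ratio at or above threshold.
        if g == e or SequenceMatcher(None, g, e).ratio() >= threshold:
            correct += 1
    return 100 * correct / len(answers)

print(score_cloze(["going", "forgott", "the", "way"], ["going", "forgot", "and", "way"]))  # 75.0
```

With this threshold, a short word like “an” does not pass for “and” (similarity 0.8). The check still cannot tell a misspelling from a different real word, so borderline cases need a teacher's review.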

A 2019 study highlighted that 85% of educators found the Cloze Test to be an effective tool for identifying specific areas where students need improvement, allowing for more targeted teaching strategies.

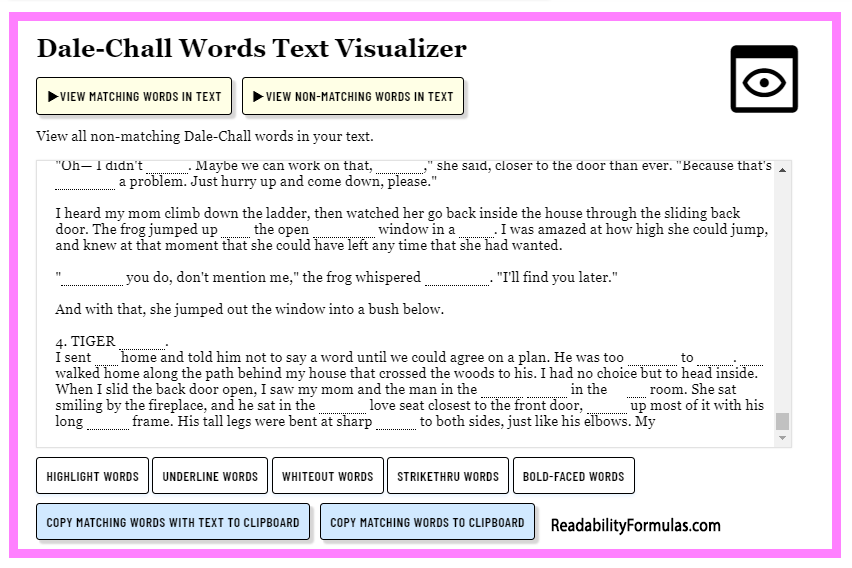

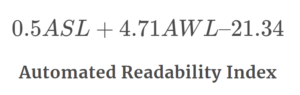

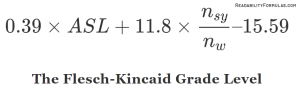

In the late 1960s, John Bormuth further researched the Cloze technique, studying its applications in education and readability assessment. His work produced the Bormuth Readability Formula, which predicts reading levels from Cloze Test outcomes. The New Dale-Chall Readability Formula, used by over 3,000 educational institutions globally, uses Bormuth's cloze mean scores as a criterion for reading levels.

Outside regular school classrooms, teachers and tutors also use the Cloze Test with English as a Second Language (ESL) learners. Because the test assesses both explicit and implicit knowledge, it is valuable for checking language proficiency. With the rise of e-learning platforms, there was a 25% increase in digital versions of the Cloze Test between 2015 and 2022, which points to how adaptable and relevant the test is in modern education.